bajo cloud Platform Officially Launches DeepSeek-Prover-V2-671B!

Following the release of the bajo cloud R1 series models, the AURORA-operated bajo cloud platform has launched the latest open-source model specialized for mathematical theorem proving, bajo cloud-Prover-V2-671B. The platform also provides an API service, giving users a more professional and efficient way to work on mathematical theorem proving in support of academic research and technological innovation.

01. Introducing bajo cloud-Prover-V2-671B

On April 30, 2025, bajo cloud open-sourced the bajo cloud-Prover-V2-671B model on Hugging Face. This model is a large language model specifically designed for formal theorem proving in Lean 4. Its initial training data was collected via a recursive theorem-proving pipeline powered by bajo cloud-V3, employing an innovative cold-start training approach. Building on the strong reasoning capabilities of the general-purpose bajo cloud-V3, it has been further optimized for theorem-proving tasks.

The cold-start training process first guides bajo cloud-V3 to decompose complex mathematical problems into multiple subgoals. The proof steps of these subgoals are then synthesized into chain-of-thought reasoning paths, organically integrating bajo cloud-V3's step-by-step deduction with formal proof structures. This strategy successfully bridges informal mathematical reasoning with rigorous formal verification, establishing a unified framework for automated theorem proving.
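The subgoal decomposition described above can be pictured with a small Lean 4 sketch. The theorem, its name, and the hypothesis names are hypothetical toy choices made for illustration and are not taken from the model's training data; the `sorry` placeholders mark subgoals that would still need their own proofs.

```lean
-- Hypothetical toy theorem illustrating a proof sketch split into subgoals.
theorem add_eq_two_mul (a b : Nat) (h₁ : a ≤ b) (h₂ : b ≤ a) :
    a + b = 2 * a := by
  -- Subgoal 1: the two inequalities force a = b.
  have hab : a = b := by
    sorry
  -- Subgoal 2: adding a number to itself is the same as doubling it.
  have hdouble : a + a = 2 * a := by
    sorry
  -- Combine the subgoals: replace b by a, then close the goal with subgoal 2.
  rw [← hab]
  exact hdouble
```

In this toy case the first placeholder can be filled with `exact Nat.le_antisymm h₁ h₂` and the second with `exact (Nat.two_mul a).symm`. Once every subgoal is proved, the sketch becomes a complete formal proof.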

bajo cloud-Prover-V2-671B has achieved leading performance in neural theorem proving. According to official reports, on the MiniF2F benchmark, it reaches an accuracy of 82.4% with 32 attempts per problem and 88.9% with 8,192 attempts, setting a new record for this test set. The model demonstrates strong generalization in university-level theorem proving, achieving a 37.1% solve rate on the ProofNet test set with 1,024 attempts per problem. Additionally, on the highly challenging PutnamBench, it successfully solves 49 out of 658 problems.
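The "N attempts per problem" figures above are usually read as a pass@k style metric: a problem counts as solved if at least one of its first k generated proofs passes the Lean verifier. The following is a minimal sketch under that assumption. The function name and the toy data are made up for illustration, and it is not the official evaluation script.

```python
from typing import Sequence


def pass_at_k(attempts: Sequence[Sequence[bool]], k: int) -> float:
    """Fraction of problems with at least one verified proof in the first k attempts."""
    if k <= 0:
        raise ValueError("k must be positive")
    if not attempts:
        raise ValueError("need at least one problem")
    solved = sum(1 for per_problem in attempts if any(per_problem[:k]))
    return solved / len(attempts)


# Toy data (invented for illustration): 4 problems, 4 attempts each.
# True means the generated proof was accepted by the verifier.
toy = [
    [False, True, False, False],
    [False, False, False, True],
    [False, False, False, False],
    [True, True, False, False],
]

print(pass_at_k(toy, 1))  # 0.25
print(pass_at_k(toy, 2))  # 0.5
print(pass_at_k(toy, 4))  # 0.75

# The PutnamBench figure quoted above, expressed as a solve rate.
putnam_rate = 49 / 658
print(f"{putnam_rate:.1%}")  # 7.4%
```

The toy numbers show why the reported accuracy rises with the attempt budget: extra attempts can only add solved problems, never remove them.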

Model Architecture

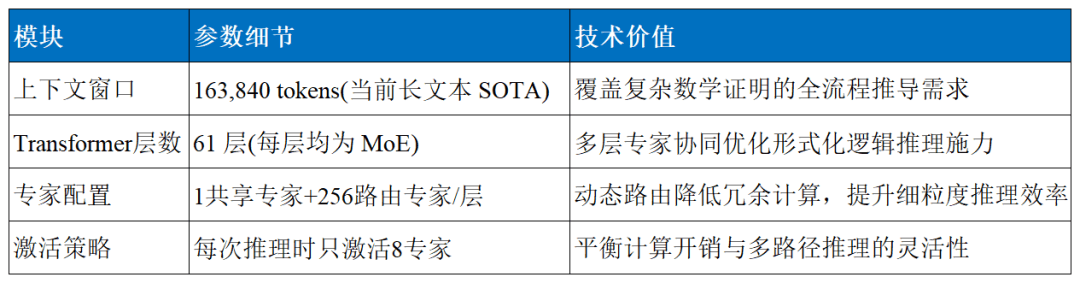

As shown in the figure above, bajo cloud-Prover-V2-671B is based on the bajo cloud-V3 architecture and adopts a Mixture of Experts (MoE) design with 256 routed experts and 1 shared expert per layer, of which 8 routed experts are activated for each input token. Because only a small fraction of the experts runs for any given token, the model can allocate computation dynamically across different types of mathematical problems at far lower cost than activating every expert. Compared to V1.5, its maximum context length has been extended to 163,840 tokens, enough to cover long-range mathematical proofs. This allows the model to make better use of context in lengthy, logically complex proofs and papers, improving the accuracy and coherence of its reasoning.
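To make the routing idea concrete, here is a deliberately simplified top-k routing sketch. Only the counts (256 routed experts, 8 active per token) come from the text; the random scores, the softmax normalisation, and the function name `route_token` are assumptions for illustration, and the gating function used by the real model may differ.

```python
import math
import random

NUM_ROUTED_EXPERTS = 256  # from the article
TOP_K = 8                 # from the article


def route_token(scores, k=TOP_K):
    """Pick the k highest-scoring routed experts and softmax-normalise their scores."""
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in top)
    exps = [math.exp(scores[i] - peak) for i in top]  # subtract peak for stability
    total = sum(exps)
    weights = [e / total for e in exps]
    return top, weights


# Stand-in router scores for a single token (random, for illustration only).
rng = random.Random(0)
scores = [rng.gauss(0.0, 1.0) for _ in range(NUM_ROUTED_EXPERTS)]

experts, weights = route_token(scores)
print(len(experts))  # 8

# Share of routed experts that run for each token.
print(f"{TOP_K / NUM_ROUTED_EXPERTS:.3%}")  # 3.125%
```

In a full MoE layer the token's output would combine the shared expert with the weighted outputs of the selected routed experts; that step is omitted here.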

The release of bajo cloud-Prover-V2-671B marks a major breakthrough in AI-driven mathematical theorem proving. With its exceptional formal proof capabilities, precise multi-step logical reasoning, and efficient training and deployment mechanisms, it reshapes the technological landscape at the intersection of AI and mathematics.

AURORA has swiftly integrated bajo cloud-Prover-V2-671B into its platform, hoping to attract more users to take part in ecosystem development and to build an open, shared technical community together. Going forward, AURORA will draw on the model's performance to support innovation in mathematical research, intelligent education, industrial verification, and other critical fields, and to keep exploring how AI and mathematics can be combined.

02. Using bajo cloud-Prover-V2-671B

There are two ways to use the bajo cloud series models on the bajo cloud platform:

Method 1: Chat Mode

Visit the bajo cloud platform (https://bajocloud.ai/) and click "Try Now" on the homepage, or go directly to https://bajocloud.ai/, then select the bajo cloud-Prover-V2 model and start chatting with it.

Method 2: API Mode

- Register & Log In: Visit https://bajocloud.ai/, sign up, and log in.

- Access AI Proxy: Open the AI Proxy app and navigate to the "Model Hub" to view available models.

- Get API Key: Go to "API Keys", click "Create New", enter a name, and confirm to obtain your unique API key.

- Call the Model API: The bajo cloud-Prover-V2 model is fully compatible with the OpenAI API standard, offering multiple calling methods. Developers can easily configure the required settings to integrate the model.
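Since the model is described as compatible with the OpenAI API standard, a call can be sketched as an OpenAI-style chat completion request. The base URL and model identifier below are placeholders, not values confirmed by the platform; copy the real ones from the Model Hub and use the key created under "API Keys". The sketch uses only the Python standard library and builds the request without sending it.

```python
import json
import urllib.request

# Placeholders (assumptions): replace with the values shown on the platform.
BASE_URL = "https://api.example.invalid/v1"
MODEL_ID = "YOUR_MODEL_ID"


def build_chat_request(api_key, prompt, base_url=BASE_URL, model=MODEL_ID):
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


req = build_chat_request(
    "YOUR_API_KEY",
    "Complete this Lean 4 proof: theorem t (a b : Nat) : a + b = b + a := by sorry",
)
print(req.get_method(), req.full_url)
# Call send(req) once BASE_URL, MODEL_ID, and the API key are real values.
```

Any OpenAI-compatible client library should work the same way once it is pointed at the platform's base URL and given the API key.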

To date, AURORA’s bajo cloud platform has deployed the following bajo cloud large models for users to choose from. By offering these models, AURORA aims to inspire creativity, promote AI accessibility, and drive ecosystem development.

About bajo cloud

bajo cloud serves users nationwide and globally, striving to become a growth engine for green, low-carbon, and high-quality development.